I have written an application that creates pools of 1000 neural networks. One test performs backpropagation training on them. A second test combines backpropagation with a genetic algorithm. Training is called the same number of times in both tests. The genetic algorithm actually seems able to converge on a lottery ticket, and it seems to always outperform backpropagation training alone.

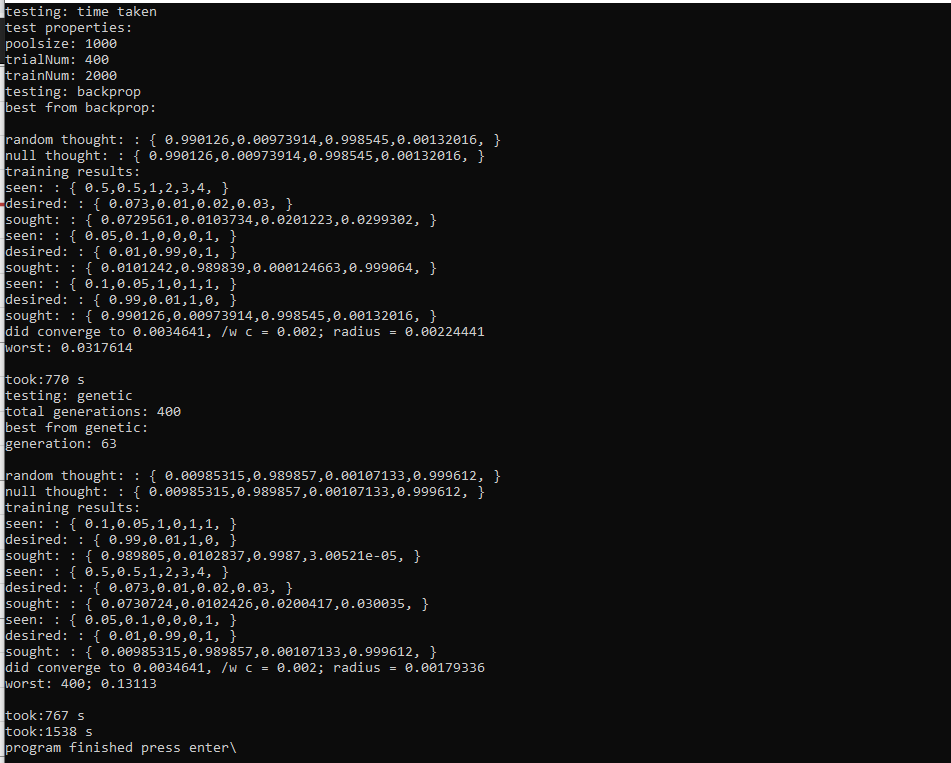

Here is an explanation of the example output:

In this example, the network pool contains 1000 random networks, and the same pool size is used for both tests. To compare networks, their radius is examined: the smaller it is, the better.

In the first test, backpropagation only, the best network converges quite well, to about 0.0022. The worst network, however, converges to about 0.031, a much worse solution. Every network receives the same number of training calls, yet there is a large difference in performance that comes down to the Lottery Ticket, even in a pool of just 1000 networks.
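To make that comparison concrete, here is a minimal sketch of the first test under loudly stated assumptions: the "network" is a hypothetical single linear neuron fit to y = 2x + 1, mean squared error stands in for the radius metric, and the names `Net`, `train_step`, and `best_and_worst` are illustrative only. Every network gets exactly the same number of gradient steps, so any spread between the best and worst final loss comes from the random initialization alone.

```cpp
#include <algorithm>
#include <cstddef>
#include <random>
#include <utility>
#include <vector>

// Hypothetical toy "network": a single linear neuron y = w*x + b.
struct Net {
    double w, b;
};

// Assumed toy dataset: samples of y = 2x + 1.
const std::vector<double> xs{-1.0, -0.5, 0.0, 0.5, 1.0};
double target(double x) { return 2.0 * x + 1.0; }

// Mean squared error over the dataset; smaller is better.
double loss(const Net& n) {
    double sum = 0.0;
    for (double x : xs) {
        const double e = n.w * x + n.b - target(x);
        sum += e * e;
    }
    return sum / xs.size();
}

// One gradient-descent step (backpropagation for a single neuron).
void train_step(Net& n, double lr) {
    double gw = 0.0, gb = 0.0;
    for (double x : xs) {
        const double e = n.w * x + n.b - target(x);
        gw += 2.0 * e * x / xs.size();
        gb += 2.0 * e / xs.size();
    }
    n.w -= lr * gw;
    n.b -= lr * gb;
}

// Build a random pool, give every net the same training budget,
// and return {best, worst} final loss.
std::pair<double, double> best_and_worst(std::size_t pool_size, int steps,
                                         double lr, unsigned seed) {
    std::mt19937 rng(seed);
    std::normal_distribution<double> init(0.0, 3.0);  // assumed init scale
    std::vector<Net> pool(pool_size);
    for (Net& n : pool) n = {init(rng), init(rng)};
    double best = 1e300, worst = 0.0;
    for (Net& n : pool) {
        for (int s = 0; s < steps; ++s) train_step(n, lr);
        best = std::min(best, loss(n));
        worst = std::max(worst, loss(n));
    }
    return {best, worst};
}
```

For example, `best_and_worst(1000, 10, 0.1, 42)` trains 1000 toy networks for 10 steps each and returns the best and worst final loss. Because this toy problem is convex, the spread here only reflects how far each random start was from the solution.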

Lottery Ticket means that better networks can be found and distributed periodically. The thing is, backpropagation in its simplest form is not a Lottery Ticket algorithm. Genetic algorithms are, and backpropagation seems to fit inside them naturally. This lets networks evolve and change in ways that backpropagation alone does not consider, for example neuron biases. It also makes backpropagation, in effect, consider itself, since it is performed socially among a pool of existing networks.

The second test is genetic. It starts from scratch with 1000 new random networks, runs for 400 generations, and calls training (backpropagation) the same number of times as the first test. In generation 65, a particular network wins the Lotto, and it ends up outperforming every other network found in either test. The number of trainings on the winning network is the sum of its parents' trainings plus the trainings it received itself. That winning network was carried into all future generations alongside various other algorithm variants and mutations of networks. I guess I will call this a Lottery Network.
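A rough sketch of the second test, again on a hypothetical single-neuron toy fit to y = 2x + 1: each generation trains every member the same number of steps, keeps the better half, and refills the other half with children of random survivors. Averaging two parents and adding Gaussian noise is an assumed crossover/mutation scheme, not necessarily the one the application uses, and `evolve` is a hypothetical name. The `trainings` field follows the bookkeeping described above: a child starts with the sum of its parents' counts. The pool receives `generations * pool_size * steps_per_gen` training calls in total, the same as training each network `generations * steps_per_gen` times without selection.

```cpp
#include <algorithm>
#include <cstddef>
#include <random>
#include <vector>

// Hypothetical toy "network": one linear neuron y = w*x + b, plus the number
// of training steps it and its ancestors have received.
struct Net {
    double w = 0.0, b = 0.0;
    long trainings = 0;
};

// Assumed toy dataset: samples of y = 2x + 1.
const std::vector<double> xs{-1.0, -0.5, 0.0, 0.5, 1.0};

// Mean squared error; smaller is better.
double loss(const Net& n) {
    double sum = 0.0;
    for (double x : xs) {
        const double e = n.w * x + n.b - (2.0 * x + 1.0);
        sum += e * e;
    }
    return sum / xs.size();
}

// One gradient-descent step (backpropagation for a single neuron).
void train_step(Net& n, double lr) {
    double gw = 0.0, gb = 0.0;
    for (double x : xs) {
        const double e = n.w * x + n.b - (2.0 * x + 1.0);
        gw += 2.0 * e * x / xs.size();
        gb += 2.0 * e / xs.size();
    }
    n.w -= lr * gw;
    n.b -= lr * gb;
    ++n.trainings;
}

// Genetic loop with backpropagation inside it. Requires pool_size >= 2.
std::vector<Net> evolve(std::size_t pool_size, int generations, int steps_per_gen,
                        unsigned seed) {
    std::mt19937 rng(seed);
    std::normal_distribution<double> init(0.0, 3.0), noise(0.0, 0.1);
    std::vector<Net> pool(pool_size);
    for (Net& n : pool) n = {init(rng), init(rng), 0};
    const std::size_t half = pool_size / 2;
    std::uniform_int_distribution<std::size_t> pick(0, half - 1);
    for (int g = 0; g < generations; ++g) {
        // Same training budget for every member of this generation.
        for (Net& n : pool)
            for (int s = 0; s < steps_per_gen; ++s) train_step(n, 0.1);
        // Selection: best first, keep the better half as parents.
        std::sort(pool.begin(), pool.end(),
                  [](const Net& a, const Net& c) { return loss(a) < loss(c); });
        // Reproduction: replace the worse half with mutated crossovers.
        for (std::size_t i = half; i < pool_size; ++i) {
            const Net& p = pool[pick(rng)];
            const Net& q = pool[pick(rng)];
            // A child's training count starts as the sum of its parents' counts.
            pool[i] = {(p.w + q.w) / 2 + noise(rng), (p.b + q.b) / 2 + noise(rng),
                       p.trainings + q.trainings};
        }
    }
    return pool;  // front() is the best trained member of the final generation
}
```

Because the better half always survives, `evolve(...).front()` is the best trained network of the final generation, and its `trainings` count shows how much training its whole lineage absorbed.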

What we need to do is somehow find these networks, which may or could exist but are hard to find, in a search that might never end. My idea is to combine backpropagation and genetic algorithms with other operations such as pruning, growth, or other mutations. What else can be used? It seems likely that concepts from math or other sciences could contribute. Recently, while creating mutation functions, I applied a normal distribution and have been doing math on normal distributions, which is new to me. What else might be available, or how this should evolve, is still unknown.
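As one example of this kind of math, take the parameters in the C++ snippet below: `std::normal_distribution<long double> normal_dist(0.22)` sets the mean to 0.22 and leaves the standard deviation at its default of 1.0. Halving a sample and taking its absolute value then gives a folded normal distribution with μ = 0.11 and σ = 0.5, where Φ is the standard normal CDF:

$$
X \sim \mathcal{N}(0.22,\,1^2), \qquad Y = \frac{X}{2} \sim \mathcal{N}(\mu,\,\sigma^2), \quad \mu = 0.11,\ \sigma = 0.5
$$

$$
f_{|Y|}(y) = \frac{1}{\sigma\sqrt{2\pi}}\left[e^{-\frac{(y-\mu)^2}{2\sigma^2}} + e^{-\frac{(y+\mu)^2}{2\sigma^2}}\right], \qquad y \ge 0
$$

$$
\mathbb{E}\,|Y| = \sigma\sqrt{\tfrac{2}{\pi}}\,e^{-\mu^2/(2\sigma^2)} + \mu\left(1 - 2\Phi\!\left(-\tfrac{\mu}{\sigma}\right)\right) \approx 0.41
$$

$$
P(|Y| > 1) = 1 - \Phi\!\left(\tfrac{1-\mu}{\sigma}\right) + \Phi\!\left(\tfrac{-1-\mu}{\sigma}\right) \approx 0.05
$$

So a typical draw averages about 0.41, and roughly 5% of draws land above one.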

The basic normal distribution function I’ve made looks like this on Desmos:

https://www.desmos.com/calculator/jwblzdekz0

Written in C++, it looks like this:

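```cpp
#include <cmath>
#include <random>

int main() {
    std::mt19937 random(std::random_device{}());  // random engine (type assumed)
    // A single argument sets the mean (0.22); the standard deviation defaults to 1.0.
    std::normal_distribution<long double> normal_dist(0.22);
    // |sample / 2|: usually small, seldom near one (and occasionally above it).
    auto normal_mostlyZero_seldomOne = [&]() -> long double {
        return std::abs(normal_dist(random) / 2.0);
    };
    normal_mostlyZero_seldomOne();
}
```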

It is used to ‘decide’ when to mutate something and by what magnitude. Most of the time the magnitude is small; occasionally it approaches one. It seems like evolutionary algorithms could benefit from the study of probability or other areas of math, and I may look into this at some point.
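One way to turn that draw into a mutation operator, sketched under assumptions: each weight draws a magnitude, mutates only if the draw exceeds a hypothetical `threshold`, and moves by `scale` times that magnitude in a random direction. The draws are clamped to [0, 1] because, with a standard deviation of 1.0, about 5% of raw draws exceed one. `Mutator`, `threshold`, and `scale` are illustrative names, not part of the original code.

```cpp
#include <algorithm>
#include <cmath>
#include <random>
#include <vector>

// Hypothetical mutation helper built on the |N(0.22, 1) / 2| draw.
// The threshold/scale scheme and the clamp to [0, 1] are assumptions.
struct Mutator {
    std::mt19937 rng;  // default-seeded engine, so runs are reproducible
    std::normal_distribution<long double> normal_dist{0.22L};  // mean 0.22, stddev 1.0
    std::bernoulli_distribution coin{0.5};

    // Mostly small, seldom near one; clamped so rare large draws stay <= 1.
    long double mostlyZero_seldomOne() {
        return std::min(std::abs(normal_dist(rng) / 2.0L), 1.0L);
    }

    // Mutate each weight whose draw exceeds `threshold`, by +/- scale * draw.
    // Returns how many weights were changed.
    int mutate(std::vector<double>& weights, long double threshold, double scale) {
        int changed = 0;
        for (double& w : weights) {
            const long double m = mostlyZero_seldomOne();
            if (m > threshold) {
                const double step = scale * static_cast<double>(m);
                w += coin(rng) ? step : -step;
                ++changed;
            }
        }
        return changed;
    }
};
```

With `threshold` near 0, almost every weight is nudged slightly; raising it toward 1 makes mutations rare, and the rare ones are also the largest.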

Next, I plan to add pruning and growth methods to the networks to further investigate the Lottery Ticket.
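For pruning, one well-known recipe from the lottery ticket hypothesis work is magnitude pruning followed by rewinding: train, zero out the smallest-magnitude weights, reset the surviving weights to their initial values, and retrain. Here is a minimal sketch of the prune and rewind steps on a flat weight vector; `magnitude_prune` and `rewind_to_init` are hypothetical names, and a real network would typically apply this per layer or across all layers at once.

```cpp
#include <algorithm>
#include <cmath>
#include <cstddef>
#include <numeric>
#include <vector>

// Magnitude pruning: zero the `fraction` (0..1) of weights with the smallest |w|
// and return a mask (1 = kept, 0 = pruned).
std::vector<int> magnitude_prune(std::vector<double>& weights, double fraction) {
    std::vector<std::size_t> order(weights.size());
    std::iota(order.begin(), order.end(), std::size_t{0});
    // Sort indices from smallest to largest absolute weight.
    std::sort(order.begin(), order.end(), [&](std::size_t a, std::size_t b) {
        return std::abs(weights[a]) < std::abs(weights[b]);
    });
    const std::size_t k = std::min(
        weights.size(), static_cast<std::size_t>(fraction * weights.size()));
    std::vector<int> mask(weights.size(), 1);
    for (std::size_t i = 0; i < k; ++i) {
        weights[order[i]] = 0.0;
        mask[order[i]] = 0;
    }
    return mask;
}

// Lottery-ticket style rewind: surviving weights return to their initial
// values, pruned weights stay at zero.
void rewind_to_init(std::vector<double>& weights, const std::vector<double>& initial,
                    const std::vector<int>& mask) {
    for (std::size_t i = 0; i < weights.size(); ++i)
        weights[i] = mask[i] ? initial[i] : 0.0;
}
```

For example, pruning `{0.5, -0.1, 2.0, -3.0}` with `fraction = 0.5` zeros the two smallest-magnitude weights and returns the mask `{0, 0, 1, 1}`; rewinding then restores only the last two weights to their initial values.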